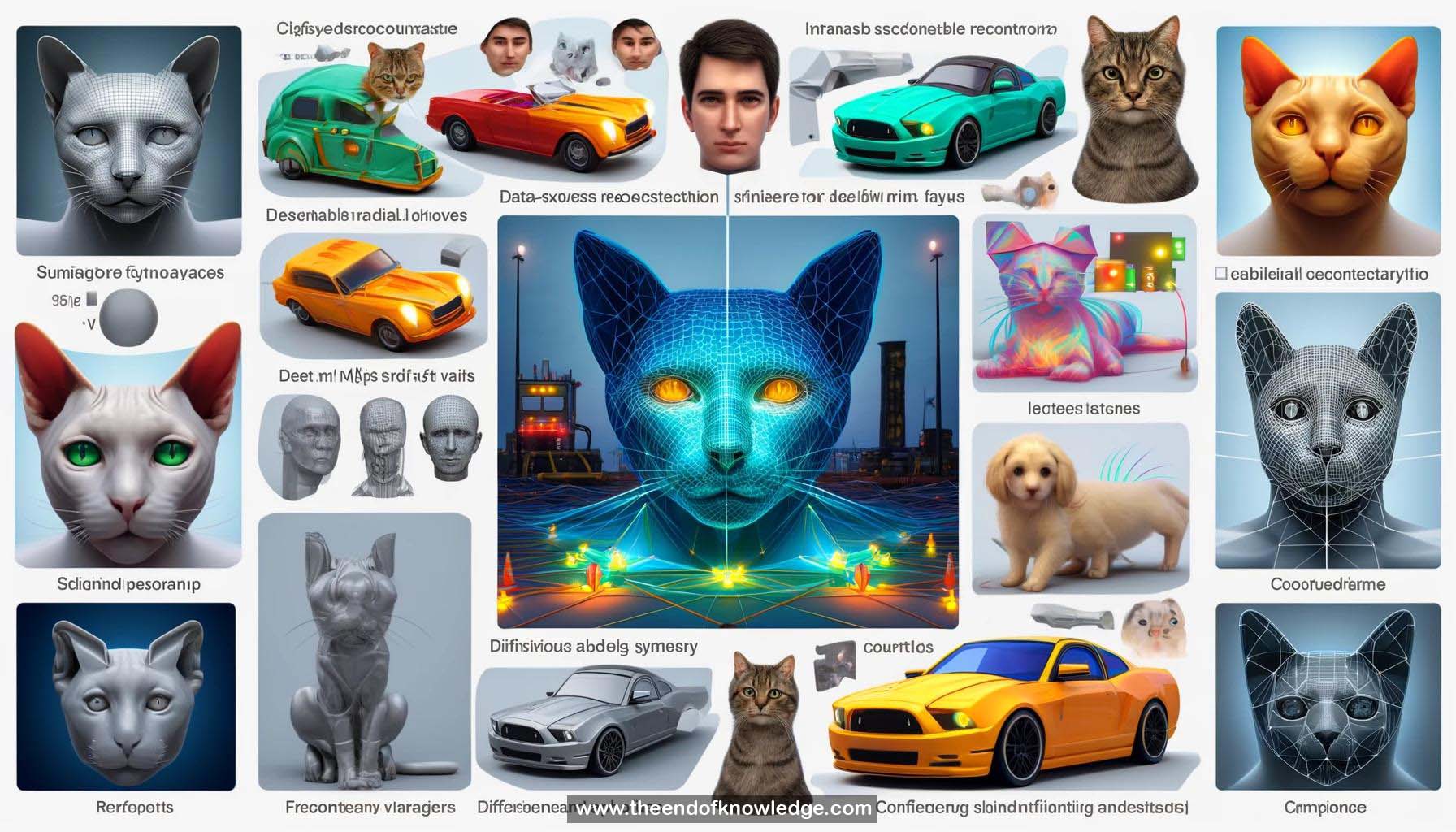

Concept Graph & Summary using Claude 3 Opus | Chat GPT4o | Llama 3:

Summary:

1.- Learning deformable 3D objects from single-view images without manual annotations or additional supervision.

2.- Training only requires a set of single-view images of a certain object category.

3.- After training, the model predicts instance-specific 3D shapes from a single input image.

4.- Many objects in the world, including animals and man-made objects, exhibit bilateral symmetry.

5.- A photogeometric autoencoding pipeline disentangles 3D shape (depth map), pose, and texture from an input image.

6.- The pipeline is trained with reconstruction loss using a differentiable renderer.

7.- Symmetry is used to avoid degenerate solutions by requiring the model to predict a symmetric canonical view of the object.

8.- Canonical predictions are flipped to obtain two reconstructions, and the reconstruction losses of both are minimized simultaneously.

9.- Lighting and intrinsic albedo are separated, enforcing symmetry only on the albedo to handle asymmetric lighting.

10.- Asymmetries in albedo or deformed shapes are accounted for using uncertainty modeling with confidence maps.

11.- The model learns strong priors on human faces and generalizes well to abstract faces, including drawings and emojis.

12.- The trained model can be applied to video frames without fine-tuning.

13.- Objects can be easily relit with different lighting conditions due to the intrinsic albedo and lighting decomposition.

14.- The model was also trained on cat faces, which wouldn't be possible with methods requiring additional supervision.

15.- Symmetric canonical views allow for easy rendering of the symmetry plane on input images.

16.- Asymmetries modeled by the confidence model can be visualized.

17.- Ablation studies demonstrate the importance of symmetry constraints on both albedo and depth.

18.- Predicting shading from directional light helps avoid bumpy shapes and utilizes shading cues.

19.- Confidence maps effectively model asymmetries, as demonstrated by experiments with asymmetric perturbations on images.

20.- The unsupervised method learns deformable 3D objects from single-view images using symmetry and shading as geometric cues.

21.- Intrinsic image decomposition is achieved without supervision.

22.- A web demo is available for users to try the model with their own faces or cats.

23.- The code is available online for reproducibility and further research.

24.- CVPR Live Q&A sessions are scheduled to address questions and discuss the work.

25.- The presentation concludes with a summary of the key contributions and an invitation to explore the web demo and code.
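
Points 8 and 10 above (flipped reconstructions and confidence maps) can be illustrated with a small sketch. It is not the authors' code: it assumes a Laplacian negative log-likelihood as the confidence-weighted loss, a hypothetical `render` callable standing in for the differentiable renderer, and an illustrative weight `lam_flip` on the flipped term. Images are plain nested lists to keep the example dependency-free.

```python
import math

def hflip(img):
    """Mirror a 2D map (list of rows) left to right."""
    return [row[::-1] for row in img]

def confidence_weighted_loss(recon, target, sigma):
    """Mean per-pixel Laplacian negative log-likelihood (assumed loss form).

    A large sigma (low confidence) down-weights the error at that pixel,
    but the log term penalizes being uncertain everywhere.
    """
    total, count = 0.0, 0
    for r_row, t_row, s_row in zip(recon, target, sigma):
        for r, t, s in zip(r_row, t_row, s_row):
            total += math.sqrt(2) * abs(r - t) / s + math.log(math.sqrt(2) * s)
            count += 1
    return total / count

def symmetric_loss(render, canonical, target, sigma, sigma_flip, lam_flip=0.5):
    """Reconstruction loss for the canonical prediction and its mirror image.

    `render` is a hypothetical stand-in for the differentiable renderer;
    `lam_flip` is an assumed weight for the flipped reconstruction.
    """
    recon = render(canonical)
    recon_flip = render(hflip(canonical))
    return (confidence_weighted_loss(recon, target, sigma)
            + lam_flip * confidence_weighted_loss(recon_flip, target, sigma_flip))

# Toy usage: an asymmetric "image" seen from the canonical view (identity render).
identity = lambda x: x
asym = [[1.0, 2.0, 5.0]]
uniform = [[1.0, 1.0, 1.0]]
low_conf_on_asymmetry = [[4.0, 1.0, 4.0]]
loss_uniform = symmetric_loss(identity, asym, asym, uniform, uniform)
loss_conf = symmetric_loss(identity, asym, asym, uniform, low_conf_on_asymmetry)
```

In this toy case, raising the uncertainty of the flipped reconstruction at the two asymmetric pixels lowers the total loss. This is the mechanism by which a confidence map can absorb asymmetries rather than forcing the shape or albedo to explain them.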

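Points 9, 13 and 18 above (albedo and lighting decomposition, relighting, shading from directional light) can be sketched in the same spirit. The following assumes a simple Lambertian model with hypothetical `ambient` and `diffuse` coefficients, normals estimated from the depth map by finite differences, and a convention in which normals and the light direction both point toward +z. None of these details are given in the summary; they are illustrative choices only.

```python
import math

def normals_from_depth(depth):
    """Per-pixel unit normals from finite differences of a depth map (list of rows)."""
    h, w = len(depth), len(depth[0])
    normals = []
    for y in range(h):
        row = []
        for x in range(w):
            # Central differences in the interior, one-sided at the borders.
            x0, x1 = max(x - 1, 0), min(x + 1, w - 1)
            y0, y1 = max(y - 1, 0), min(y + 1, h - 1)
            dzdx = (depth[y][x1] - depth[y][x0]) / max(x1 - x0, 1)
            dzdy = (depth[y1][x] - depth[y0][x]) / max(y1 - y0, 1)
            n = (-dzdx, -dzdy, 1.0)  # assumed convention: normals face +z
            length = math.sqrt(sum(c * c for c in n))
            row.append(tuple(c / length for c in n))
        normals.append(row)
    return normals

def shade(albedo, depth, light_dir, ambient=0.3, diffuse=0.7):
    """Render albedo * (ambient + diffuse * max(0, n . l)) per pixel."""
    length = math.sqrt(sum(c * c for c in light_dir))
    light = tuple(c / length for c in light_dir)
    normals = normals_from_depth(depth)
    image = []
    for a_row, n_row in zip(albedo, normals):
        out_row = []
        for a, n in zip(a_row, n_row):
            cos_angle = max(0.0, sum(nc * lc for nc, lc in zip(n, light)))
            out_row.append(a * (ambient + diffuse * cos_angle))
        image.append(out_row)
    return image

# Relighting: same albedo and depth, two different light directions.
albedo = [[0.5] * 3 for _ in range(3)]
ramp = [[float(x) for x in range(3)] for _ in range(3)]  # plane tilted along x
lit_front = shade(albedo, ramp, (0.0, 0.0, 1.0))
lit_side = shade(albedo, ramp, (-1.0, 0.0, 1.0))
```

Because the albedo is kept separate from the shading term, relighting only requires calling `shade` again with a new `light_dir`. Here the side light is aligned with the tilted plane's normal, so `lit_side` is brighter than `lit_front`.
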
Knowledge Vault built by David Vivancos 2024